Glossary

This is the SSFWIKI glossary with limited dictionary function. See resources for examples you will often find linked for your convenience.

Glossaries elsewhere

- Glossary of Legislative Terms at congress.gov

- Glossary at parliament.uk

- Legal glossary at lawsociety.org.uk

- Online Sexual Exploitation and Abuse: a Glossary of Terms at equalitynow.org[1st seen in 1], an NGO founded in 1992, w:Equality Now

Association for Computing Machinery

The w:Association for Computing Machinery (ACM) is a US-based international w:learned society for w:computing. It was founded in 1947, and is the world's largest scientific and educational computing society. (Wikipedia)

See wikidata:Q127992 for translations, descriptions and links to WMF wikis about ACM.

Adequate Porn Watcher AI

Adequate Porn Watcher AI (concept) is a 2019 concept for an AI that would protect humans against visual synthetic filth by ripping the disinformation filth into revealing light.

Main article Adequate Porn Watcher AI (concept).

Dictionary entries

- English | en | English | Adequate Porn Watcher AI

- eesti | et | Estonian | Piisav Pornovaataja AI on 2019. aasta tehisintellekti idee, mis kaitseks inimesi sünteetilise visuaalse saasta eest, rebides desinformatsioonisaasta valguse.

- suomi | fi | Finnish | Adequate Porn Watcher AI -tekoälykonsepti (eng) on 2019 konsepti tekoälystä, joka suojelisi ihmisiä synteettistä visuaalista saastaa repimällä disinformaatiosaastan paljastavaan valoon.

- français | fr | French | l’IA Observateur Adéquat de Porno (concept) (Adequate Porn Watcher AI en anglais) est une idée pour une IA qui protégerait les humains contre les saletés synthétiques visuelles en arrachant les saletés de désinformation en lumière révélatrice.

- svenska | sv | Swedish | Adekvat Porr Tittare AI är en idé från 2019 om artificiell intelligens som skulle skydda människor från syntetisk visuell orenhet genom att riva desinformationsorenhet till avslöjande ljus.

Appearance and voice theft

Appearance is stolen with digital look-alikes and voice is stolen with digital sound-alikes. These are new and very extreme forms of identity theft. Ban covert modeling, and ban possessing or doing anything with a model of a human's voice, but do not ban the Adequate Porn Watcher AI (concept).

Audio forensics

“w:Audio forensics is the field of w:forensic science relating to the acquisition, analysis, and evaluation of w:sound recordings that may ultimately be presented as admissible evidence in a court of law or some other official venue.[1][2][3][4]”

Bidirectional reflectance distribution function

“The bidirectional reflectance distribution function (BRDF) is a function of four real variables that defines how light is reflected at an opaque surface. It is employed in the optics of real-world light, in computer graphics algorithms, and in computer vision algorithms.”

A BRDF model is a 7-dimensional model containing the geometry, textures and reflectance of the subject.

The seven dimensions of the BRDF model are as follows:

- 3 Cartesian coordinates (X, Y, Z)

- 2 for the entry angle

- 2 for the exit angle of the light.
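The seven dimensions listed above can be read as the seven inputs of a function. The following is a minimal sketch under stated assumptions, not a real capture format: `SurfacePoint`, `toy_albedo` and `brdf_model` are hypothetical names, the albedo texture is invented for illustration, and the reflectance is a simple Lambertian (perfectly diffuse) one, so the returned value does not actually vary with the angles.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SurfacePoint:
    """Hypothetical sample point on the subject: 3 Cartesian coordinates."""
    x: float
    y: float
    z: float


def toy_albedo(point: SurfacePoint) -> float:
    """Assumed toy texture: albedo varies with height, clamped to [0, 1]."""
    return max(0.0, min(1.0, 0.5 + 0.1 * point.z))


def brdf_model(point: SurfacePoint,
               theta_in: float, phi_in: float,
               theta_out: float, phi_out: float) -> float:
    """Seven inputs: position (3), entry angles (2), exit angles (2).

    Angles are in radians, with theta measured from the surface normal.
    Returns reflectance in 1/steradian. The model is Lambertian, so the
    angles only matter for the above-the-surface check.
    """
    above_surface = (0.0 <= theta_in < math.pi / 2
                     and 0.0 <= theta_out < math.pi / 2)
    if not above_surface:
        return 0.0
    return toy_albedo(point) / math.pi
```

Calling `brdf_model(SurfacePoint(0.0, 0.0, 0.0), 0.3, 1.0, 0.2, 2.0)` returns the albedo at that point divided by pi. A measured model would replace the Lambertian formula with captured data indexed by the same seven values.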

See wikidata:Q856980 for translations, descriptions and links to WMF wikis about BRDF.

Burqa

“A burqa, also known as chadri or paranja in Central Asia, is an enveloping outer garment worn by women in some Islamic traditions to cover themselves in public, which covers the body and the face.”

See wikidata:Q167884 for translations, descriptions and links to WMF wikis about burqas.

Covert modeling

Covert modeling refers to covertly modeling aspects of a subject, i.e. without express consent.

The main known cases are:

- Covertly modeling the human appearance into a 7-dimensional bidirectional reflectance distribution function model or another type of model.

- Covertly modeling the human voice.

There is ongoing work to model e.g. a human's style of writing, but this is probably not as drastic a threat as the covert modeling of appearance and of voice.

Cyberbullying

- w:Cyberbullying or cyberharassment is a form of w:bullying or w:harassment using w:electronic means. Cyberbullying and cyberharassment are also known as online bullying. (Wikipedia)

DARPA

The Defense Advanced Research Projects Agency (w:DARPA) is an agency of the w:United States Department of Defense responsible for the development of emerging technologies for use by the military. (Wikipedia)

- DARPA program: 'Media Forensics (MediFor)' at darpa.mil since 2016

- DARPA program: 'Semantic Forensics (SemaFor)' at darpa.mil since 2019

See wikidata:Q207361 for translations, descriptions and links to WMF wikis about DARPA.

Deepfake

Man of Steel produced by DC Entertainment and Legendary Pictures, distributed by Warner Bros. Pictures. Modification done by Reddit user "derpfakes".

This is a sample from a copyrighted video recording. The person who uploaded this work and first used it in an article, and subsequent people who use it in articles, assert that this qualifies as fair use.

“Deepfake (a portmanteau of "deep learning" and "fake") is a technique for human image synthesis based on artificial intelligence. It is used to combine and superimpose existing images and videos onto source images or videos using a machine learning technique called a "generative adversarial network" (GAN).”

See wikidata:Q49473179 for translations, descriptions and links to WMF wikis about deepfakes.

Digital look-alike

When no camera exists, but a subject imaged with a simulation of a (movie) camera deceives the viewer into believing it is some living or dead person, it is a digital look-alike. An alternative term is look-like-anyone-machine.

Saying "digital look-alike of X" would imply possession, but "digital look-alike made of X" is more suited, unless the target really is in possession of it.

Dictionary entries

- English | en | English | Digital look-alikes

- eesti | et | Estonian | digitaalsed duplikaadid

- suomi | fi | Finnish | digitaaliset kaksoiskuvajaiset

- français | fr | French | les sosies numériques

- svenska | sv | Swedish | digitala dupletter

Digital sound-alike

When human testing cannot determine whether a synthesized recording is a simulation of some person's speech or a recording of that person's actual voice, it is a pre-recorded digital sound-alike. An alternative term is sound-like-anyone-machine.

Dictionary entries

- English | en | English | Digital sound-alikes

- eesti | et | Estonian | digitaalsed topelthelid

- suomi | fi | Finnish | digitaaliset kaksoisäänet

- français | fr | French | les sonne-mêmes numériques

- svenska | sv | Swedish | digitala dubbla ljud

Generative artificial intelligence

“w:Generative artificial intelligence or generative AI (also GenAI) is a type of w:artificial intelligence (AI) system capable of generating text, images, or other media in response to w:prompts.”

Examples of generative AI that generate images / sceneries based on w:prompts by the user. These generated images may contain synthetic human-like fakes placed in fairly realistic-looking scenarios.

Examples of w:large language models being used to generate conversational AIs:

- w:ChatGPT by OpenAI based on OpenAI's w:Generative pre-trained transformer (GPT)

- w:Bard (chatbot) by Google and based on Google's w:LaMDA

Generative AI encompasses more than conversational AI, as it can also create images and scenery.

Generative adversarial network

“A generative adversarial network (GAN) is a class of machine learning systems. Two neural networks contest with each other in a zero-sum game framework. This technique can generate photographs that look at least superficially authentic to human observers,[5] having many realistic characteristics. It is a form of unsupervised learning.[6]”
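The zero-sum framing in the quote can be made concrete with the value function from the Goodfellow et al. paper cited as [5]: V(D, G) is the mean of log D(x) over real samples plus the mean of log(1 − D(G(z))) over generated samples. The discriminator tries to maximize V and the generator tries to minimize it. The sketch below only evaluates that value for given discriminator outputs. It trains nothing, and the function names `gan_value` and `payoffs` are hypothetical.

```python
import math


def gan_value(d_on_real, d_on_fake):
    """Value V(D, G) for given discriminator outputs.

    d_on_real: probabilities D(x) assigned to real samples.
    d_on_fake: probabilities D(G(z)) assigned to generated samples.
    All probabilities must lie strictly between 0 and 1.
    """
    real_term = sum(math.log(p) for p in d_on_real) / len(d_on_real)
    fake_term = sum(math.log(1.0 - p) for p in d_on_fake) / len(d_on_fake)
    return real_term + fake_term


def payoffs(d_on_real, d_on_fake):
    """Zero-sum game: the discriminator gains V, the generator gains -V."""
    value = gan_value(d_on_real, d_on_fake)
    return value, -value
```

When the discriminator can no longer tell the two kinds of sample apart and outputs 0.5 everywhere, `gan_value` evaluates to −log 4, which the cited paper identifies as the value at the game's equilibrium.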

See wikidata:Q25104379 for translations, descriptions and links to WMF wikis about GANs.

Human-like image synthesis

Human-like image synthesis is a dangerous technology. The term "human-like image synthesis" would be semantically less wrong than w:human image synthesis.

“Human image synthesis can be applied to make believable and even photorealistic renditions of human-likenesses, moving or still. This has effectively been the situation since the early 2000s. Many films using computer generated imagery have featured synthetic images of human-like characters digitally composited onto the real or other simulated film material.”

See wikidata:Q17118711 for translations, descriptions and links to WMF wikis about human image synthesis and please contribute.

Institute for Creative Technologies

The Institute for Creative Technologies was founded in 1999 at the University of Southern California by the United States Army. It collaborates with the w:United States Army Futures Command, w:United States Army Combat Capabilities Development Command, w:Combat Capabilities Development Command Soldier Center and w:United States Army Research Laboratory.

See wikidata:Q6039265 for translations, descriptions and links to WMF wikis about ICT.

Large language model

“A w:large language model (LLM) is a w:language model consisting of a w:neural network with many parameters (typically billions of weights or more), trained on large quantities of unlabeled text using w:self-supervised learning or w:semi-supervised learning.”

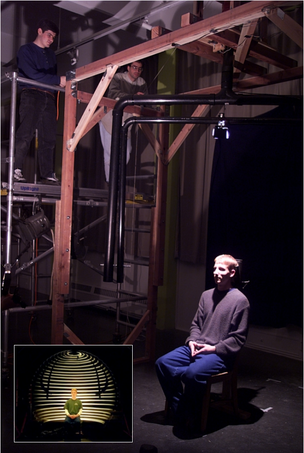

Light stage

It consists of two rotary axes with height and radius control. Light source and a polarizer were placed on one arm and a camera and the other polarizer on the other arm.

Original image by Debevec et al. – Copyright ACM 2000 – https://dl.acm.org/citation.cfm?doid=311779.344855 – Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page.

“A light stage or light cage is equipment used for shape, texture, reflectance and motion capture often with structured light and a multi-camera setup.”

See wikidata:Q17097238 for translations, descriptions and links to WMF wikis about light stages.

MATINE

MATINE (w:fi:MATINE) is the Scientific Advisory Board for Defence of the w:Ministry of Defence of Finland. MATINE is an abbreviation of MAanpuolustuksen TIeteellinen NEuvottelukunta and it arranges an annual public research seminar. In 2019 a research group funded by MATINE presented their work 'Synteettisen median tunnistus' at defmin.fi (Recognizing synthetic media).

As of 2021-08-11 there is no Wikidata item for translations, descriptions and links to WMF wikis about MATINE.

Media forensics

Media forensics deals with ascertaining the genuineness of media.

“Wikipedia does not have an article on media forensics, but it does have one on w:audio forensics”

Niqāb

“A niqab or niqāb ("[face] veil"; also called a ruband) is a garment of clothing that covers the face, worn by some Muslim women as a part of a particular interpretation of hijab (modest dress).”

See wikidata:Q210583 for translations, descriptions and links to WMF wikis about niqābs.

No camera

No camera (!) refers to the fact that a simulation of a camera is not a camera. People should realize the difference, and thus the different restrictions imposed by many types of laws, e.g. those of physics and physiology. Analogously see #No microphone, usually seen below this entry.

No microphone

No microphone is needed when using synthetic voices, as you just model them without needing to capture any sound. Analogously see the entry #No camera, usually seen above this entry.

Reflectance capture

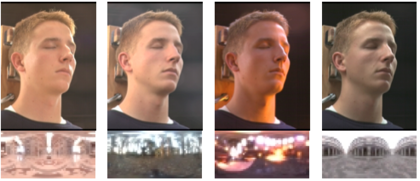

Reflectance capture is made by measuring the reflected light for each incoming light direction and every exit direction, often at many different wavelengths. Using polarisers allows the specular and the diffuse reflected light to be captured separately. The first known reflectance capture over the human face was made in 1999 by Paul Debevec et al. at the w:University of Southern California.

As of 2020-11-19 Wikipedia does not have an article on reflectance capture.

Relighting

Original image Copyright ACM 2000 – http://dl.acm.org/citation.cfm?doid=311779.344855 – Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page.

Relighting means applying a completely different w:lighting situation to an image or video which has already been imaged. As of 2020-09 the English Wikipedia does not have an article on relighting.

Since 2021-03-15 w:Relighting has been a redirect to w:Polynomial texture mapping.

w:Polynomial texture mapping (PTM), also known as Reflectance Transformation Imaging (RTI), is a technique of w:digital imaging and w:interactively displaying objects under varying w:lighting conditions to reveal surface phenomena. (Wikipedia)

Past: As of 2020-11-19 Wikipedia does not have an article on relighting.
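One common way to apply a new lighting situation to an already imaged subject is image-based relighting, which relies on light adding up linearly: if the subject has been photographed once per light direction, an image under any mix of those lights is the weighted sum of the captured images. The following is a minimal sketch of that idea, assuming grayscale images stored as nested lists. The function name `relight` and the data layout are hypothetical, and this is not the polynomial texture mapping technique mentioned above.

```python
def relight(basis_images, light_weights):
    """Combine per-light basis images into one relit image.

    basis_images: one image per light direction; each image is a list of
        rows, each row a list of float pixel intensities.
    light_weights: intensity of each light in the new lighting situation.
    """
    if len(basis_images) != len(light_weights):
        raise ValueError("need exactly one weight per basis image")
    height = len(basis_images[0])
    width = len(basis_images[0][0])
    relit = [[0.0] * width for _ in range(height)]
    for image, weight in zip(basis_images, light_weights):
        for row in range(height):
            for col in range(width):
                # Light transport is linear, so contributions simply add.
                relit[row][col] += weight * image[row][col]
    return relit
```

For example, `relight([[[1.0, 0.0]], [[0.0, 2.0]]], [0.5, 2.0])` dims the first light to half strength and doubles the second, giving `[[0.5, 4.0]]`.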

Sexual bullying

- w:Sexual bullying is a type of w:bullying and w:sexual harassment that occurs in connection with a person's w:sex, body, w:sexual orientation or with w:sexual activity. It can be w:physical, w:verbal, and/or w:emotional abuse. (Wikipedia)

SISE

SISE refers to the #Stopping Internet Sexual Exploitation Act - a House of Commons of Canada bill introduced in 2022.

SISEA

- #Stop Internet Sexual Exploitation Act - a US Senate bill in the 2019-2020 session

Spectrogram

w:Spectrograms are used extensively in the fields of w:music, w:linguistics, w:sonar, w:radar, w:speech processing, w:seismology, and others. Spectrograms of audio can be used to identify spoken words phonetically, and to analyse the various calls of animals. (Wikipedia)
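A spectrogram can be computed by cutting a signal into short overlapping frames and taking the magnitude of the discrete Fourier transform of each frame. The sketch below uses only the Python standard library and a direct (slow) DFT so that every step is visible. The function names `make_tone` and `spectrogram`, the Hann window and the default frame sizes are illustrative assumptions, and a practical tool would use an FFT library instead.

```python
import cmath
import math


def make_tone(freq_hz, sample_rate, n_samples):
    """Generate a pure sine tone as a list of float samples."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(n_samples)]


def spectrogram(samples, frame_size=64, hop=32):
    """Return one list of magnitudes per frame, for bins 0..frame_size // 2.

    Bin k corresponds to the frequency k * sample_rate / frame_size.
    """
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        # A Hann window reduces leakage from cutting the signal into frames.
        windowed = [s * (0.5 - 0.5 * math.cos(2 * math.pi * n / frame_size))
                    for n, s in enumerate(frame)]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            acc = sum(w * cmath.exp(-2j * math.pi * k * n / frame_size)
                      for n, w in enumerate(windowed))
            magnitudes.append(abs(acc))
        frames.append(magnitudes)
    return frames
```

With a sample rate of 8000 Hz and a frame size of 64, a 1000 Hz tone from `make_tone(1000.0, 8000, 256)` peaks in bin 1000 × 64 / 8000 = 8 of every frame. Plotting the frames side by side, with time on one axis and bin number on the other, gives the familiar spectrogram picture.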

See wikidata:Q103865657 for translations, descriptions and links to WMF wikis about spectrograms.

Speech synthesis

“Speech synthesis is the artificial production of human speech”

See wikidata: for translations, descriptions and links to WMF wikis about speech synthesis.

Stop Internet Sexual Exploitation Act

The Stop Internet Sexual Exploitation Act (SISEA) was a bill introduced to the 2019-2020 session of the US Senate.

Stopping Internet Sexual Exploitation Act

- House of Commons of Canada bill C-270 'Stopping Internet Sexual Exploitation Act' at parl.ca, first read to the Commons on Thursday 2022-04-28. According to townandcountrytoday.com, the author of the bill had introduced an identical bill, C-302, on Thursday 2021-05-27, but that bill was killed off by the oncoming federal elections.

Synthetic pornography

Synthetic pornography is a strong technological hallucinogen.

Synthetic terror porn

Synthetic terror porn is pornography synthesized with terrorist intent. Synthetic rape porn is probably by far the most prevalent form of this, but it must be noted that synthesizing consensual-looking sex scenes can also be terroristic in intent and effect.

Transfer learning

“Transfer learning (TL) is a research problem in machine learning (ML) that focuses on storing knowledge gained while solving one problem and applying it to a different but related problem.”

See wikidata:Q6027324 for translations, descriptions and links to WMF wikis about transfer learning.

Voice changer

“The term voice changer (also known as voice enhancer) refers to a device which can change the tone or pitch of or add distortion to the user's voice, or a combination and vary greatly in price and sophistication.”

Please see Resources#List of voice changers for some alternatives.

See wikidata:Q4224062 for translations, descriptions and links to WMF wikis about voice changers.

Permalinks

- https://en.wikipedia.org/w/index.php?title=Audio_forensics&oldid=1096442057 loaned on Friday 2022-10-21

References

- Phil Manchester (January 2010). "An Introduction To Forensic Audio". Sound on Sound.

- Maher, Robert C. (March 2009). "Audio forensic examination: authenticity, enhancement, and interpretation". IEEE Signal Processing Magazine. 26 (2): 84–94. doi:10.1109/msp.2008.931080. S2CID 18216777.

- Alexander Gelfand (10 October 2007). "Audio Forensics Experts Reveal (Some) Secrets". Wired Magazine. Archived from the original on 2012-04-08.

- Maher, Robert C. (2018). Principles of forensic audio analysis. Cham, Switzerland: Springer. ISBN 9783319994536. OCLC 1062360764.

- Goodfellow, Ian; Pouget-Abadie, Jean; Mirza, Mehdi; Xu, Bing; Warde-Farley, David; Ozair, Sherjil; Courville, Aaron; Bengio, Yoshua (2014). "Generative Adversarial Networks". arXiv:1406.2661 [cs.LG].

- Salimans, Tim; Goodfellow, Ian; Zaremba, Wojciech; Cheung, Vicki; Radford, Alec; Chen, Xi (2016). "Improved Techniques for Training GANs". arXiv:1606.03498 [cs.LG].